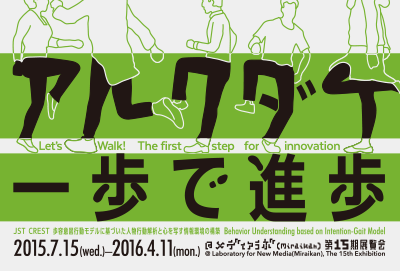

Laboratory for New Media 15th Exhibition

“Let's Walk! The first step for innovation”

The Laboratory for New Media permanent exhibition periodically updates its contents to introduce the various possibilities of expression offered by information science and technology.

Everyone walks in their own unique manner, from the way they swing their arms, to their posture, to the length of their strides. With two interactive activities in this exhibit, you can experience technology that identifies individuals and estimates age from the individual quirks in the way a person walks. This technology is being researched as part of the JST CREST “Behavior Understanding based on Intention-Gait Model” project (Research Director: Yasushi Yagi, Ph.D.). It mathematically analyzes videos of people walking to determine their characteristics.

This is an ongoing research project. Each and every step that you take is accumulated as research data and utilized for the progress of research and development. Come and walk with us to learn about the information environment of the future, where individuals can be identified by their walk.

Evaluation of cognitive ability, just from walking

While walking in place on a square mat, you answer quiz questions displayed on a monitor in front of you. A camera records your walking style during the quiz, and your ability to carry out the two tasks simultaneously (walking and responding) is analyzed. The health of your brain is then assessed based on this data.

As you age and your walking ability declines, the risk of dementia is said to increase. This technology is expected to serve as a new health management method that evaluates the risk of dementia or the effects of rehabilitation simply by filming a person’s movements.

Inventors: Fumio Okura, Masataka Niwa, Ikuhisa Mitsugami, Yasushi Yagi

Evaluation of gait personality, just from walking

Try walking straight along the green walking path. Even when you walk casually without paying attention to how you are walking, your walking style shows characteristic features in your arm swing, walking speed, stride length and so on.

Walking is a full-body movement that repeats right and left steps. By filming a person’s gait with a camera, creating a sequence of silhouette images, and analyzing that repetitive movement, it is possible to understand the characteristics of the person’s walking style. Furthermore, by comparing this with data on the walking styles of more than a thousand people, collected from previous research and this exhibit, your “walking age” can be deduced. As research data on walking styles accumulates through this exhibit, this technology is expected to deduce more information about individuals than age alone.
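As a rough illustration of what "analyzing that repetitive movement" can mean, the sketch below estimates how many frames one repetition of the walk takes. It is not the exhibit's actual method: it assumes each silhouette is a binary grid (1 = person, 0 = background), reduces each frame to a single number (the foreground pixel count), and looks for the lag at which that signal best matches itself (autocorrelation). The function names `silhouette_area` and `estimate_gait_period` are hypothetical and chosen only for this example.

```python
import math


def silhouette_area(silhouette):
    """Count foreground pixels in one binary silhouette (list of rows of 0/1)."""
    return sum(sum(row) for row in silhouette)


def estimate_gait_period(signal, min_lag=2, max_lag=None):
    """Return (lag, score): the frame lag with the highest autocorrelation.

    `signal` holds one number per video frame, for example silhouette_area().
    The score is the lagged product sum divided by the total signal energy,
    so it slightly favors shorter lags; this keeps the first repetition
    ahead of its multiples (2x, 3x the period).
    """
    n = len(signal)
    if max_lag is None:
        max_lag = n // 2
    mean = sum(signal) / n
    centered = [x - mean for x in signal]
    energy = sum(c * c for c in centered)
    if energy == 0:
        raise ValueError("signal is constant; no repetition to detect")

    best_lag, best_score = None, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        score = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / energy
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag, best_score


# Synthetic stand-in for a real recording: a signal that repeats every 12 frames.
demo_signal = [100 + 20 * math.cos(2 * math.pi * i / 12) for i in range(60)]
demo_lag, demo_score = estimate_gait_period(demo_signal)
print(demo_lag)
```

With real footage, the estimated period would be only a first step; features such as stride length or arm swing would then be measured over one repetition and compared against the collected data.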

Inventors: Mayu Okumura, Haruyuki Iwama, Chihiro Aoki, Takuhiro Kimura, Yasushi Makihara, Yasushi Yagi

Forensic analysis, just from walking

There is a growing number of cases where criminals are tracked and subsequently arrested, using images shot by security cameras set up in the city. However, it becomes extremely difficult to identify criminals if their faces are obscured by helmets or masks.

This is the first system in the world capable of comparing images of a criminal walking at the scene of a crime with images of a suspect walking at a different location, and then determining whether they are the same person. If individuals can be identified by their walking styles, criminals can be identified even when their faces are not clearly visible. In fact, this method has contributed to identifying criminals in a case of attempted arson. In the future, it is expected to play an important role in arrests through its application in a wide variety of criminal investigation activities.

Inventors: Haruyuki Iwama, Takuhiro Kimura, Daigo Muramatsu, Yasushi Makihara, Yasushi Yagi

| Term | July 15 (Wed.), 2015 - April 11 (Mon.), 2016 |
|---|---|
| Exhibitor | JST CREST Project “Behavior Understanding based on Intention-Gait Model” |